Stop guessing from CVs. Measure skills objectively instead.

The most reliable hiring decisions are built on structured, job-relevant skill measurement: grounded in evidence, designed for fairness, and validated for predictive performance, not inferred from subjective CV narratives or imperfect parsing systems.

Predictive and fair selection, grounded in evidence

How structured, job-relevant methods predict performance and improve fairness.

Reliability and consistency by design

How we design scoring systems that are consistent, reproducible, and decision-ready.

Continuous fairness and adverse impact monitoring

How we test, track, and govern outcomes to reduce bias over time.

What CVs can and cannot tell you

CVs provide context about someone’s career history. They do not provide structured evidence of capability. Screening on CVs alone is therefore subjective and structurally risky.

CV filtering is not neutral:

CVs optimize for storytelling and rely on noisy and unequal proxies (experience and education), producing inconsistent decisions over time.

82% of HR leaders admit their systems accidentally screen out qualified candidates (SHRM, 2024).

Automated filters disproportionately impact career returners, caregivers, career switchers, and international talent.

False negatives increase time-to-fill and drive mis-hire costs, estimated at about $76K per bad hire (CareerBuilder, 2017 survey).

The hidden cost of CV and ATS screening

Most CV screening today relies on keyword matching and document parsing

As AI-generated CVs become more common and more uniform, keyword filtering becomes even less reliable, so more qualified candidates are rejected and more talent is missed. Parsing documents is not the same as measuring skills.
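To make the false-negative problem concrete, here is a minimal sketch of a keyword filter. It is an illustration only: the rule set, function name, and sample CV lines are hypothetical and do not describe any particular ATS.

```python
import re

# Hypothetical screening rule: every keyword must appear verbatim in the CV.
REQUIRED_KEYWORDS = {"python", "sql", "stakeholder management"}


def passes_keyword_filter(cv_text, required=REQUIRED_KEYWORDS):
    """Return True only if every required keyword appears as a whole phrase."""
    text = cv_text.lower()
    return all(
        re.search(r"\b" + re.escape(keyword) + r"\b", text) is not None
        for keyword in required
    )


# Two invented CV excerpts describing comparable work in different words.
cv_a = "Built data pipelines in Python and SQL; led stakeholder management for three launches."
cv_b = "Wrote Postgres queries and pandas jobs daily; ran weekly reviews with business partners."

result_a = passes_keyword_filter(cv_a)  # wording matches the rule
result_b = passes_keyword_filter(cv_b)  # same kind of work, different wording
```

In this sketch the second candidate is rejected purely on wording: the filter checks vocabulary, not capability.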

70%

of qualified applicants are filtered out by automated rules (Harvard Business School & Accenture, 2021).

70 to 88%

is the typical range of CV parsing accuracy, meaning up to 30% of content may be lost or misinterpreted (Jobscan, Sovren, Affinda).

99%

of Fortune 500 companies use an applicant tracking system (ATS), making these limitations systemic.

AI-generated CVs amplify the noise

When every résumé is optimized, differentiation disappears

AI tools produce formulaic, keyword-heavy CVs that increasingly look the same.

Design-heavy or visually enhanced formats often break or confuse parsing systems.

One-click applications increase volume, forcing recruiters to tighten filters and reject even more qualified talent.

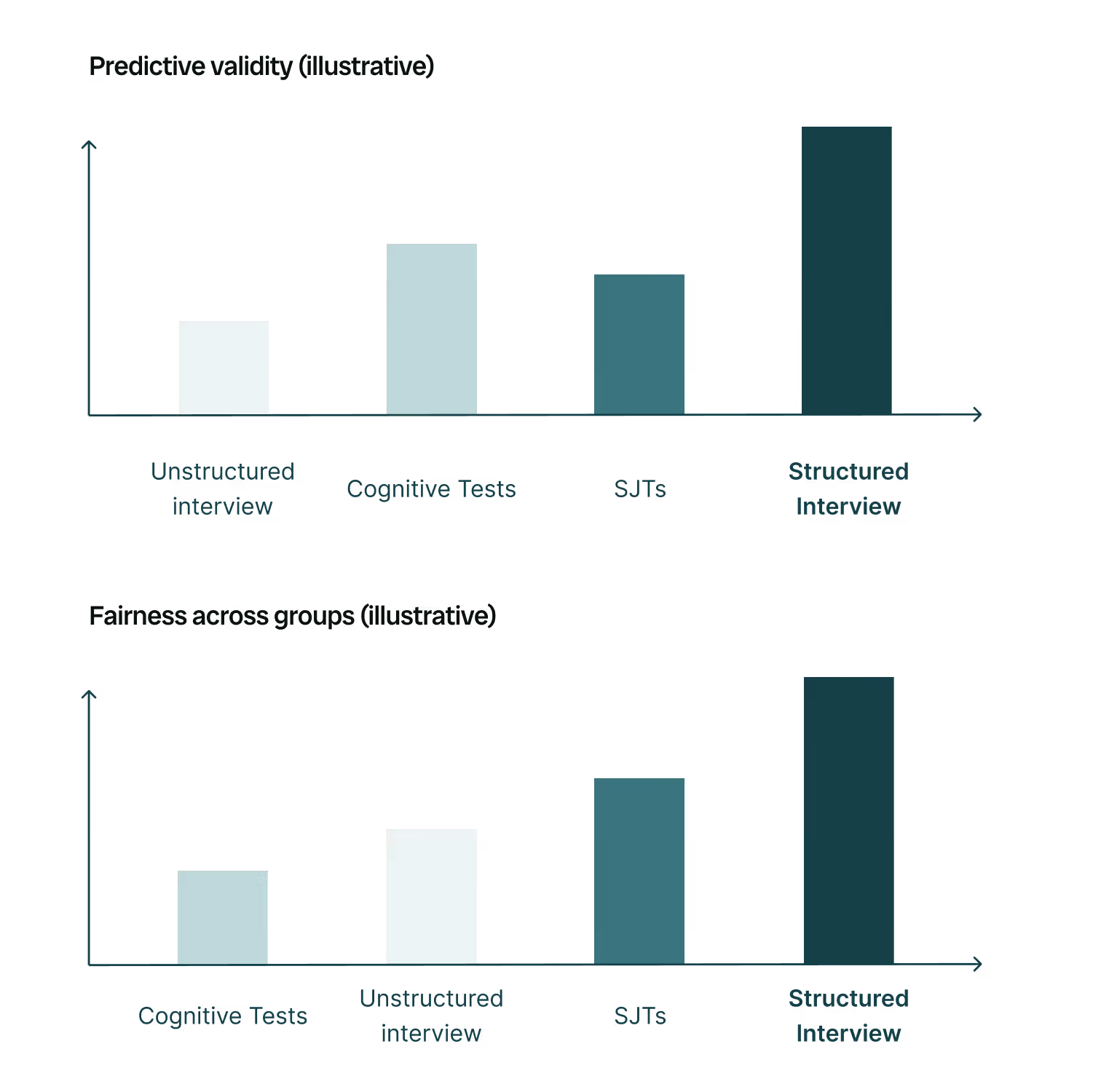

Why skills-based selection is more predictive and fair

Structured, job-relevant skill assessments outperform unstructured screening by measuring the capabilities that truly drive performance. When automated filters screen out up to 70% of qualified applicants, the solution is not better parsing; it is better measurement.

Research shows job-specific, behaviorally grounded methods are both more predictive and more equitable; structured interviews and situational judgment tests are strong examples (Sackett et al., 2023).

This is the core design principle behind Maki’s assessments: structured tasks, observable evidence, defensible scoring.

Why experience alone can mislead hiring decisions

Past roles can bring useful knowledge; they can also introduce rigidities that limit performance in a new environment.

Prior experience can add value when prior roles are similar because people bring transferable knowledge and skills.

The benefit fades as people gain organization-specific experience in the new context.

Experience can also carry rigidities: cognitive and behavioral habits from past roles can hinder performance, and those costs can persist.

Whether experience helps or hurts depends on individual attributes like adaptability and cultural fit.

What “skill” means at Maki

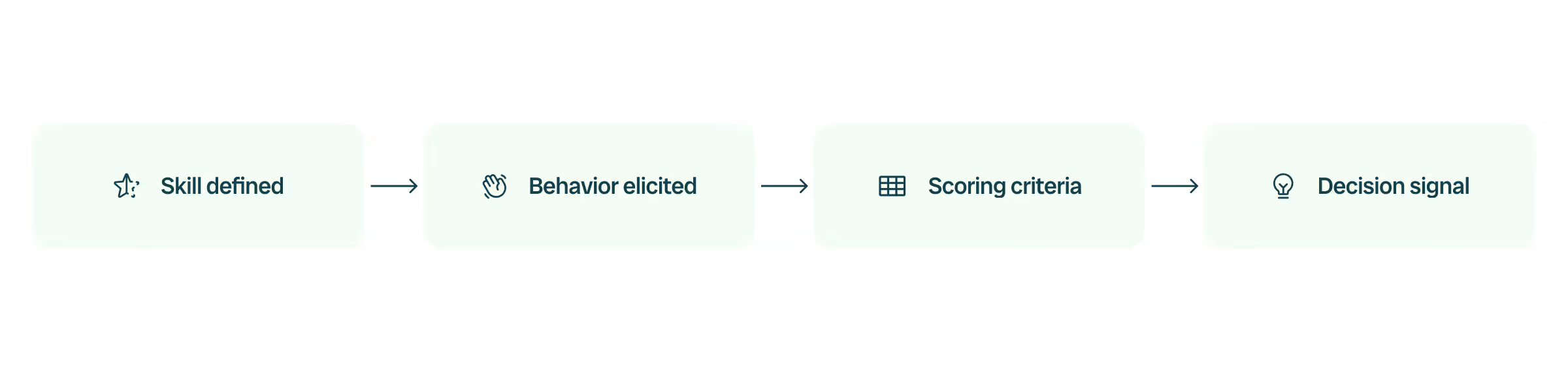

Skills defined before they are measured

Every assessment starts with clearly defined job-relevant constructs, evaluated through structured tasks that generate observable evidence and decision-ready signals.

Designed tasks that reveal real capability

Candidates complete structured prompts, scenarios, and activities built to elicit job-relevant behavior, not self-presentation or keyword matching.

Decision-ready, explainable signals

Scores are tied to observable evidence and defined criteria, enabling clear, defensible hiring decisions rather than intuition or black-box rankings.

Why Maki assessments are reliable ways to measure skills

Defined constructs before measurement

Every assessment starts with clearly defined, job-relevant capabilities, not proxies like experience, education, or pedigree.

Healthy score distributions that support real decisions

Assessments are designed to meaningfully differentiate candidates, enabling defensible cut-scores and scalable hiring.

Observable, explainable evidence

Scores are tied to structured tasks and explicit criteria, not opaque inference or black-box modeling.

Fairness and validity built into the system

Skill models are calibrated, validated, and monitored to ensure they measure what they are intended to measure, equitably and defensibly.

Reliability as a release requirement

Results must be consistent and reproducible, with strong alignment between AI scoring and expert human judgment before deployment.
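One common way to quantify alignment between two scorers is Cohen's kappa, which corrects raw agreement for agreement expected by chance. The sketch below is an illustration, not Maki's actual release check: the function name, the sample scores, and the threshold are all hypothetical, and a weighted variant may suit ordinal scales better.

```python
from collections import Counter


def cohens_kappa(rater_a, rater_b):
    """Unweighted Cohen's kappa for two equal-length lists of category labels."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("Both raters must score the same non-empty set of items.")
    n = len(rater_a)
    # Share of items on which both raters gave the same label.
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Agreement expected by chance, from each rater's label frequencies.
    counts_a, counts_b = Counter(rater_a), Counter(rater_b)
    expected = sum(counts_a[label] * counts_b[label] for label in counts_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both raters used a single identical label throughout
    return (observed - expected) / (1 - expected)


# Invented scores on a 1-3 rubric for eight candidate responses.
ai_scores = [3, 2, 3, 1, 2, 3, 1, 2]
expert_scores = [3, 2, 2, 1, 2, 3, 1, 3]

HYPOTHETICAL_RELEASE_THRESHOLD = 0.8  # assumption for illustration only

kappa = cohens_kappa(ai_scores, expert_scores)
ready_for_release = kappa >= HYPOTHETICAL_RELEASE_THRESHOLD
```

Here the two scorers agree on six of eight responses, yet kappa lands near 0.62 once chance agreement is removed, so this invented scoring model would not clear the illustrative bar.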

How Maki delivers skills-based hiring in practice

From early screening to in-depth evaluation, Maki operationalizes structured skill measurement across the entire hiring funnel.

Shiro: early funnel skills screening

Mochi: structured conversational screening

Ken: deeper evaluation for high-stakes roles

Security

Technology & trust

From compliance certifications to bias audits and AI Act readiness, Maki is built on transparency, safety, and fairness.

Bias-audited AI

Explainable scoring

Ethical AI standards
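Adverse impact monitoring is often summarized with a selection-rate ratio: each group's pass rate divided by the highest group's pass rate, with ratios below 0.8 flagged under the four-fifths rule of thumb from the US Uniform Guidelines on Employee Selection Procedures. The sketch below assumes that rule; the group names, counts, and function names are hypothetical and do not describe Maki's audit method.

```python
FOUR_FIFTHS_THRESHOLD = 0.8  # rule-of-thumb cutoff, used here as an assumption


def selection_rates(outcomes):
    """Map each group to selected / applicants, skipping groups with no applicants."""
    return {
        group: selected / applicants
        for group, (selected, applicants) in outcomes.items()
        if applicants > 0
    }


def adverse_impact_ratios(outcomes):
    """Divide each group's selection rate by the highest group's selection rate."""
    rates = selection_rates(outcomes)
    if not rates:
        return {}
    top_rate = max(rates.values())
    if top_rate == 0:
        return {group: 1.0 for group in rates}  # nobody selected, so no disparity
    return {group: rate / top_rate for group, rate in rates.items()}


# Invented counts: group -> (candidates passed, candidates assessed).
outcomes = {"group_a": (48, 120), "group_b": (27, 90)}

ratios = adverse_impact_ratios(outcomes)
flagged = sorted(g for g, ratio in ratios.items() if ratio < FOUR_FIFTHS_THRESHOLD)
```

With these invented numbers, group_b passes at 30% against 40% for group_a, giving a ratio of about 0.75 and a flag for review. A flag is a prompt for investigation, not proof of bias; small samples in particular call for a statistical significance check alongside the ratio.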